4 Automated Testing Principles to Make Autotests More Reliable

The author of this article is Oleksandr Podoliako, Lead Software Test Automation Engineer at EPAM.

Introduction

I have been working in QA for almost six years, and the biggest challenge I see in automated testing is the lack of certainty in test results. A failed test does not reliably tell us that we have found a defect, because a failure can have many causes: problems with the environment or tools, legitimate changes introduced during application development, and poorly designed tests.

According to a survey conducted at the end of 2021, around 59% of respondents reported encountering “flaky” tests on a regular basis. Every test failure requires human attention, which lengthens the feedback loop. Worst of all, automated tests stop being truly automatic. So, how can we improve this situation?

In this article, I briefly outline four principles that can make automated tests more reliable. They are based on my experience in the industry, and I hope they will help you get more accurate test results and save time and resources for your team.

Principle 1: Isolation

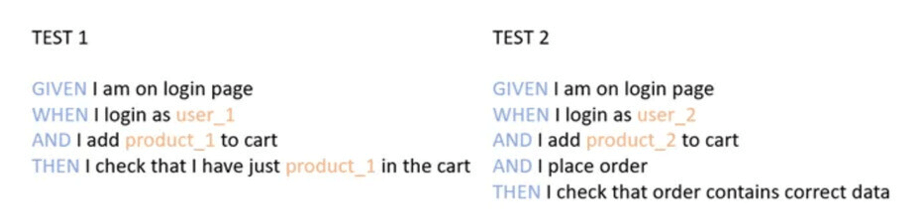

Tests should be isolated as much as possible from the external environment and from each other. I often see one test indirectly, or even directly, affect another, and I have seen numerous cases where a global environment configuration affected a whole group of tests. Of course, how much isolation is possible depends on the application. One approach is to use unique data for each test, such as different users, products, and so on. It is also a good idea to analyze where and how tests can interact with each other and to reduce those interactions. The ultimate solution is to run tests in separate environments.

In the example below, the tests are isolated by using unique test data. Without isolation, TEST 1 could be affected by TEST 2.
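One common way to get unique data per test is to append a random suffix to every entity a test creates. The sketch below is a minimal illustration, assuming the application identifies users by username and email; the `UserData` record and the `unique_user` helper are hypothetical names, not part of any framework.

```python
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class UserData:
    """Hypothetical test-data record; real fields depend on the application."""
    username: str
    email: str


def unique_user(prefix: str = "qa") -> UserData:
    # A random UUID suffix makes collisions between tests (or parallel runs)
    # practically impossible, so no two tests share the same account.
    suffix = uuid.uuid4().hex[:12]
    return UserData(
        username=f"{prefix}_{suffix}",
        email=f"{prefix}_{suffix}@example.test",
    )


if __name__ == "__main__":
    user_for_test_1 = unique_user("test1")
    user_for_test_2 = unique_user("test2")
    # Each test works with its own user, so changes made by TEST 2
    # (for example, editing a profile) cannot leak into TEST 1.
    print(user_for_test_1)
    print(user_for_test_2)
```

The same idea applies to products, orders, or any other entity a test creates; the `.test` email domain is used here only because it is reserved and never resolves.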

Principle 2: Managing application state

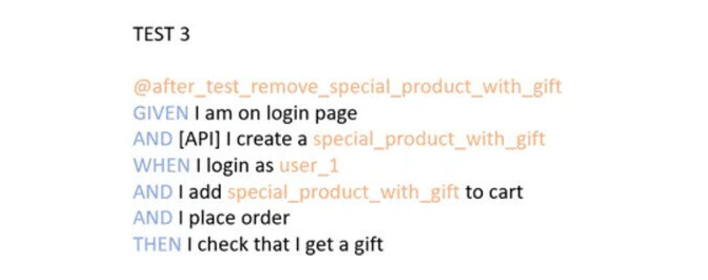

An engineer should be able to manage the application state through the API or directly through the database. All external services should be mocked. This makes the application's behavior predictable and ensures that the application is in the correct state when the test starts.
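As a sketch of what mocking an external service can look like at the code level, the example below replaces a payment provider with `unittest.mock.Mock` from the Python standard library. `CheckoutService` and its `charge` call are hypothetical application code; in a UI or end-to-end suite the same role is usually played by a stub server rather than an in-process mock.

```python
from unittest.mock import Mock


class CheckoutService:
    """Hypothetical application code that depends on an external payment provider."""

    def __init__(self, payment_gateway):
        self.payment_gateway = payment_gateway

    def checkout(self, order_id: str, amount: float) -> str:
        response = self.payment_gateway.charge(order_id=order_id, amount=amount)
        return "PAID" if response["status"] == "approved" else "FAILED"


def test_checkout_with_mocked_gateway():
    # The real gateway is replaced with a mock that always approves,
    # so the result no longer depends on a third-party sandbox being available.
    gateway = Mock()
    gateway.charge.return_value = {"status": "approved"}

    service = CheckoutService(gateway)

    assert service.checkout("order-42", 19.99) == "PAID"
    gateway.charge.assert_called_once_with(order_id="order-42", amount=19.99)


if __name__ == "__main__":
    test_checkout_with_mocked_gateway()
    print("ok")
```

Because the mock's response is fixed, the test can also cover rare cases on demand, such as a declined payment, by changing `return_value`.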

This principle also helps create small, atomic tests that are more readable and run faster.

In the example below, a dedicated product is created through the API, so we can be confident that the product is available. The product is removed after TEST 3 finishes, keeping the environment clean.

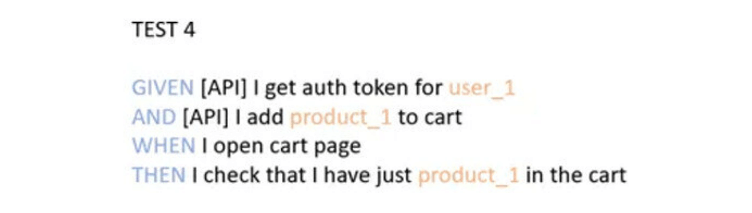
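The create-then-clean-up pattern can be expressed as a context manager, so that cleanup runs even when the test fails. This is a minimal sketch: `FakeProductApi` is an in-memory stand-in for a hypothetical product REST API, and in a real suite its methods would send HTTP requests to the application under test.

```python
import itertools
from contextlib import contextmanager
from typing import Dict, Iterator


class FakeProductApi:
    """In-memory stand-in for a hypothetical product API (create/delete products)."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self.products: Dict[int, dict] = {}

    def create_product(self, name: str, stock: int) -> int:
        product_id = next(self._ids)
        self.products[product_id] = {"name": name, "stock": stock}
        return product_id

    def delete_product(self, product_id: int) -> None:
        self.products.pop(product_id, None)


@contextmanager
def temporary_product(api, name: str, stock: int = 10) -> Iterator[int]:
    # Arrange: put the application into a known state through the API,
    # not through the UI.
    product_id = api.create_product(name=name, stock=stock)
    try:
        yield product_id
    finally:
        # Clean up even if the test body raises, so the next test
        # starts from a clean environment.
        api.delete_product(product_id)


if __name__ == "__main__":
    api = FakeProductApi()
    with temporary_product(api, "special-product") as pid:
        assert api.products[pid]["stock"] == 10  # the product is guaranteed to exist
    assert pid not in api.products               # and it is removed afterwards
    print("ok")
```

In pytest, the same shape is usually written as a fixture that creates the data, `yield`s it to the test, and deletes it after the `yield`.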

Principle 3: API over UI

This principle builds heavily on the previous one. The aim is to use the user interface only on the page where we actually want to verify something. A proxy tool helps us discover which requests the UI sends under the hood, so that we can reproduce them with API and database calls.

In the example below, the required authentication token is obtained through the API. The product is also added through the API, and we open only the one page where we perform the checks.
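The flow can be sketched as follows, assuming the application accepts an authentication token as a cookie. `ShopApi`, `Browser`, the `auth_token` cookie name, the `/cart` path, and the credentials are all hypothetical; the real endpoints and session mechanism are what the proxy would reveal. The in-memory fakes exist only to make the sketch runnable.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


class ShopApi(Protocol):
    """Hypothetical API surface discovered with a proxy; real endpoints will differ."""
    def login(self, username: str, password: str) -> str: ...
    def add_to_cart(self, token: str, product_id: int) -> None: ...


class Browser(Protocol):
    """Hypothetical thin wrapper around a UI driver such as Selenium or Playwright."""
    def set_cookie(self, name: str, value: str) -> None: ...
    def open(self, path: str) -> None: ...


def open_cart_with_product(api: ShopApi, browser: Browser, product_id: int,
                           username: str = "qa_user", password: str = "secret") -> None:
    # 1. Authenticate through the API instead of filling in the login form.
    token = api.login(username, password)
    # 2. Prepare the state through the API instead of clicking through the catalog.
    api.add_to_cart(token, product_id)
    # 3. Hand the session to the browser and open only the page under test.
    browser.set_cookie("auth_token", token)
    browser.open("/cart")


@dataclass
class FakeApi:
    carts: Dict[str, List[int]] = field(default_factory=dict)

    def login(self, username: str, password: str) -> str:
        token = f"token-for-{username}"
        self.carts.setdefault(token, [])
        return token

    def add_to_cart(self, token: str, product_id: int) -> None:
        self.carts[token].append(product_id)


@dataclass
class FakeBrowser:
    cookies: Dict[str, str] = field(default_factory=dict)
    visited: List[str] = field(default_factory=list)

    def set_cookie(self, name: str, value: str) -> None:
        self.cookies[name] = value

    def open(self, path: str) -> None:
        self.visited.append(path)


if __name__ == "__main__":
    browser = FakeBrowser()
    open_cart_with_product(FakeApi(), browser, product_id=7)
    print(browser.visited)  # only the cart page was opened in the UI
```

Only step 3 touches the UI, so a change to the login form or the catalog page cannot break a test whose purpose is to check the cart.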

Principle 4: Functionality-driven test coverage

The UI often changes frequently during development. I believe this problem can be mitigated by covering only the core functionality while development is ongoing. For example, a “buy” button is unlikely to disappear from a page meant for selling something.
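One way to put this into practice is to tag each UI test with the functionality it covers and, while the UI is still in flux, run only the tests tagged as core. The sketch below uses a hypothetical `covers` decorator and `select_tests` function to keep it self-contained; with pytest, the usual equivalent is a custom marker such as `@pytest.mark.core` selected with `-m core`.

```python
from typing import Callable, Dict, List, Set

# Hypothetical registry mapping each UI test to the functionality it covers.
TEST_REGISTRY: Dict[str, Set[str]] = {}


def covers(*features: str) -> Callable:
    """Decorator that records which functionality a test exercises."""
    def decorator(test_fn: Callable) -> Callable:
        TEST_REGISTRY[test_fn.__name__] = set(features)
        return test_fn
    return decorator


@covers("core", "purchase")
def test_buy_button_adds_item_to_cart(): ...


@covers("cosmetic")
def test_banner_carousel_order(): ...


@covers("core", "search")
def test_search_returns_product(): ...


def select_tests(stage: str) -> List[str]:
    # While the UI is still in active development, run only tests for core
    # functionality, which is less likely to change; run everything otherwise.
    if stage == "development":
        return sorted(name for name, tags in TEST_REGISTRY.items() if "core" in tags)
    return sorted(TEST_REGISTRY)


if __name__ == "__main__":
    print(select_tests("development"))
    print(select_tests("release"))
```

The stage names and tags are illustrative; what counts as core is a product decision, not something the code can infer.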

Conclusion

In conclusion, I want to stress that the principles above reflect my personal perspective and are intended as advice rather than rules. You can choose to follow all, some, or none of them, depending on your specific situation.

Of course, following these principles requires additional effort, and the application must be designed to support isolation and state management.

I believe, however, that following them will ultimately make the work easier for all team members, from QA to developers, and, most importantly, will improve product quality.
